SEO experts, competitive analysts, and digital marketers all need reliable search data to track rankings, ads, and market trends. But today’s Search Engine Results Pages (SERPs) are personalised, location-based, and protected by strong anti-bot systems, making it hard to get clean, unbiased results.

A well-configured SERP scraping proxy setup keeps your scraping smooth, stable, and anonymous, even when search engines tighten their defences. In this post, 9Proxy explains what this technology is, how it differs from ordinary proxies, the best providers in 2026, and how to build your own scraper step by step.

What Is a SERP Scraping Proxy?

A SERP scraping proxy is an intermediary server designed specifically to help you collect data from Search Engine Results Pages safely and accurately. Instead of your scraper sending requests directly to Google or Bing, the proxy forwards them on your behalf, hiding your real IP and location.

This masking lets you view SERPs the way real users in different cities or countries see them. A SERP scraping proxy service also uses large, rotating pools of trusted IPs to avoid blocks, reduce CAPTCHAs, and keep your scraper stable while gathering rankings, ads, and other search data at scale.

Why Use Proxies for SERP Scraping?

Search engines use strong anti-bot systems to spot automated traffic and protect their data, so scraping at scale tends to fail quickly without a SERP scraping proxy. Proxies are essential because they keep your SERP data accurate, stable, and scalable in several key ways. In many advanced setups, they are combined with broader web scraping proxy techniques to distribute requests and avoid detectable patterns.

Bypass IP Restrictions and Blocks: Rotating proxies can assign a different IP to each request, so no single address sends too much traffic. This helps you avoid rate limits, temporary blocks, and long-term IP bans.

Handle CAPTCHAs: When search engines suspect bots, they trigger CAPTCHA challenges. A strong SERP scraping proxy setup can switch to a fresh IP the moment a CAPTCHA appears, allowing your scraper to continue smoothly.

Achieve Geo-Targeting: Search engines personalise results by location. Proxies let you choose IPs from specific countries or cities, ensuring the SERP data you collect matches what real users in that region would see.

Maintain Scalability: Proxies spread your requests across large IP pools, making it possible to run high-volume SERP scraping without overloading your system or getting blocked.

Together with good scraping logic, this setup gives you accurate, repeatable SERP data instead of noisy or blocked results.

Types of SERP Scraping Proxy

When choosing a SERP scraping proxy, the quality and source of the IP are the most important factors. The right choice depends on your budget, the volume of data required, and the specific search engine you are targeting.

We must understand the pros and cons of each common proxy type to select the right tool for our scraping project, ensuring a balance between anonymity and cost.

| Proxy Type | IP Source | Anonymity Level | Best for SERP Scraping |
| --- | --- | --- | --- |
| Residential Proxies | Real ISP IPs assigned to households. | Very High | Geo-targeting, large-scale Google/Bing scraping. |
| Data Centre Proxies | IPs from cloud providers/servers. | Medium | Fast, high-volume, non-sensitive search engines. |
| Mobile Proxies | IPs from 3G/4G/5G mobile carriers. | Highest | High-security targets, mobile SERP tracking. |
| ISP Proxies | Static residential IPs hosted on servers. | High | Long-term sessions, stable geo-location (less rotation). |

Residential proxies are usually the safest option for heavy scraping because they look like real user traffic, which makes them much harder for search engines to detect. Data Centre proxies are faster and cheaper, but their lower anonymity often causes more blocks on major search engines.

Top 6 SERP Scraping Proxy Providers (2026)

To help you choose a dependable service, we reviewed the top providers in the market by looking at their IP quality, pool size, features, and overall performance in SERP scraping. Below is a list of six trusted options that users rely on in 2026, each offering its own strengths and trade-offs.

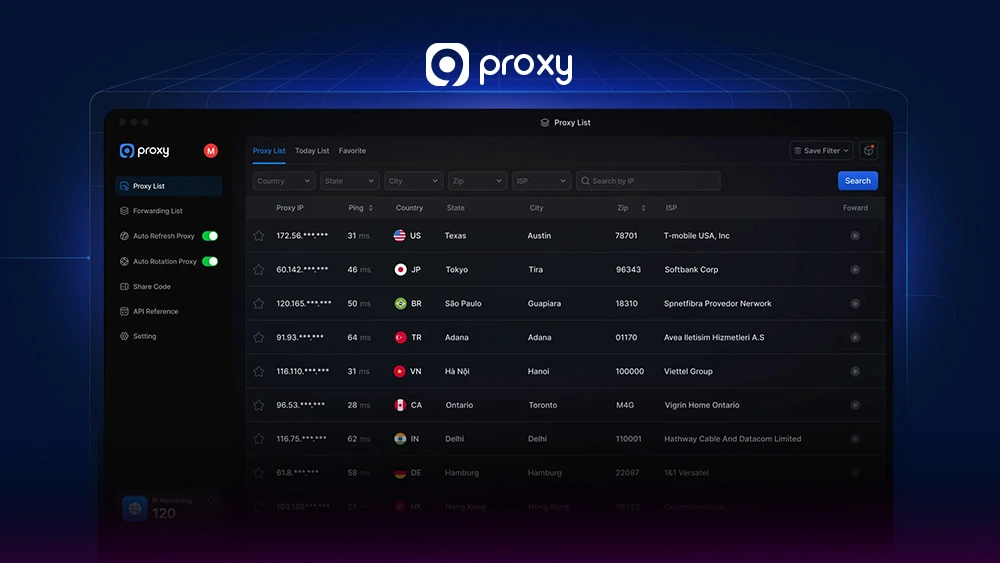

9Proxy

9Proxy provides high-quality, ethically sourced Residential IPs, making us a strong choice for sensitive scraping tasks that require safe, reliable performance. We focus on delivering clean, fast proxies that work well with complex geo-targeting needs on major search engines.

- Pros: 100% real Residential and Mobile IPs, clear pricing, accurate geo-targeting, and strong IP reputation.

- Cons: We mainly offer rotating IPs, with less focus on static ISP options (though stable choices are available).

- When to Use: When you need strong anonymity, low block rates, and reliable performance for large-scale Google or e-commerce SERP scraping.

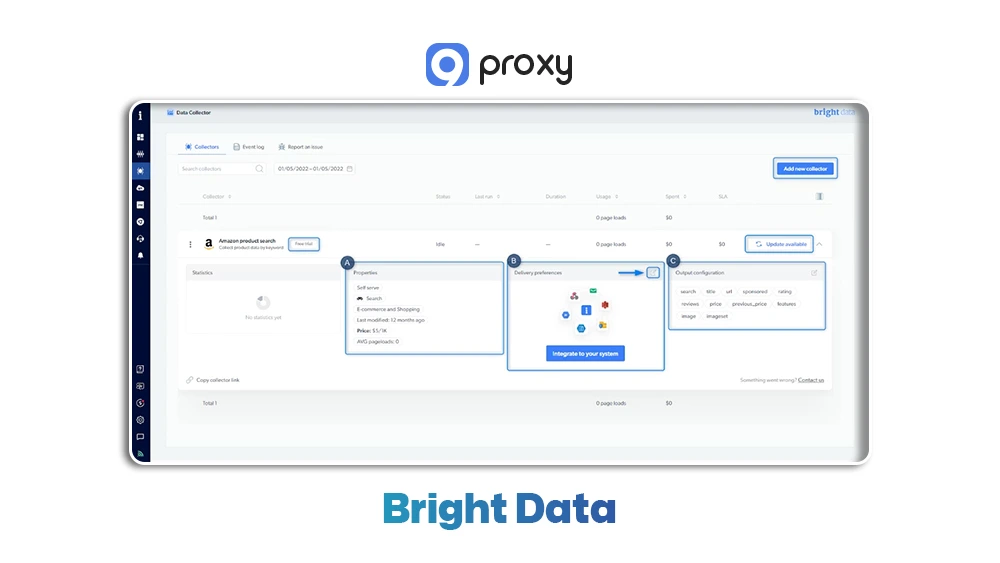

Bright Data

Bright Data (formerly Luminati) offers a huge global proxy network that includes Data Centre, Residential, ISP, and Mobile proxies. Their strong infrastructure and powerful Proxy Manager make them suitable for demanding scraping workloads.

- Pros: Very large IP pool, advanced Proxy Manager, high reliability, and excellent scalability.

- Cons: Pricing can be complex and expensive for high-volume tasks, and the platform may feel overwhelming for new users.

- When to Use: Ideal for enterprise-level scraping projects that need multiple proxy types and advanced management tools.

Oxylabs

Oxylabs is a premium proxy provider known for its Residential and Data Centre proxies, designed for large-scale public data collection. They have a strong focus on static ISP proxies, which are especially useful for keeping long and stable scraping sessions active.

- Pros: Large pool of clean Residential and Static ISP IPs, fast response times, and strong customer support.

- Cons: Premium pricing, and plans that are less flexible than Bright Data’s pay-as-you-go options.

- When to Use: Best for long-running SERP scraping projects or any data collection task that needs stable, dedicated IPs with consistent speed.

NetNut

NetNut stands out because it sources its Residential IPs directly from ISPs worldwide instead of relying on peer-to-peer networks. This structure provides high reliability, strong speed, and genuine IP quality, which helps reduce blocks during scraping.

- Pros: Direct ISP-sourced residential network, fast connection speeds, and robust rotation for large-scale scraping.

- Cons: Smaller IP pool than P2P-based providers and mainly focused on B2B clients.

- When to Use: Ideal when you need high speed and stable IP performance for SERP scraping and general web scraping.

SOAX

SOAX offers a simple, easy-to-use platform with very detailed geo-targeting options, including city and mobile carrier selection. Their rotating residential and mobile IPs make them a solid mid-range option for users needing accurate and localised SERP data.

- Pros: Precise filtering by country, region, city, and carrier; clean mobile IP pool; reasonable pricing.

- Cons: Network stability can vary compared to top-tier premium providers.

- When to Use: Best for localised SEO or marketing tasks that need accurate geo-targeting from a dedicated SERP scraping proxy.

IPRoyal

IPRoyal is known for its budget-friendly pricing and offers both rotating and static residential proxies, along with affordable ISP and Data Centre options. It’s a popular choice for users who want reliable residential IPs without overspending.

- Pros: Very affordable, simple interface, supports sticky sessions, and provides a solid range of static proxy options.

- Cons: Smaller IP pool than top-tier providers, which can lead to occasional blocks on very large scraping projects.

- When to Use: Ideal for startups, individuals, or small-scale scraping tasks where keeping costs low is a major priority.

Choosing the Right Proxy for SERP Scraping

Choosing the right SERP scraping proxy means finding the best mix of speed, price, and anonymity. Because search engines are always improving their anti-bot systems, your proxy provider needs certain features to keep your scraping smooth and reliable. Here’s what you should look for:

- IP Pool Size and Diversity: Pick a provider with a very large and varied IP pool. A big pool helps you rotate often, avoid reused or flagged IPs, and keep your results consistent.

- Rotation Frequency and Management: Your provider should let you rotate IPs however you need, either on every request or with short sticky sessions. Automatic block detection and quick IP replacement are extremely helpful for any SERP scraping proxy setup. For more practical insights and real-world implementations, see the 9Proxy blog.

- Geo-Targeting Capability: You should be able to choose IPs from specific countries, states, or even cities. This ensures the SERP data you collect matches what real users see in those locations.

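- Proxy Protocol Support (SOCKS5): Regular HTTP/HTTPS proxies are enough to fetch SERPs. SOCKS5 works at a lower level and can carry other kinds of traffic as well, which adds flexibility, but it does not encrypt traffic by itself. A provider that supports both protocols keeps your options open whatever scraping stack you use.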

- High IP Reputation: Proxies need to be clean and not previously abused. Residential and Mobile proxies usually have the best reputation, giving you higher success rates for long-term scraping.

How to Build a SERP Scraper (With Proxy Integration)

Building a dependable scraper that can get past search engine protections takes more than just writing code; it requires a clear plan built around your SERP scraping proxy setup. The process breaks down into six steps:

Step 1: Proxy Selection and Integration

Start by choosing a proxy provider (such as 9Proxy). Get your proxy details (IP/Host, Port, Username, Password). In your scraper code, whether you use Python, Node.js, or another language, configure all HTTP/HTTPS requests to go through these proxies.
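As a minimal sketch of Step 1, the snippet below routes requests through an authenticated proxy using only the Python standard library. The host, port, and credentials are placeholders, and `build_proxy_url` and `make_opener` are illustrative helper names rather than part of any provider's SDK. It assumes your provider accepts the common `http://user:pass@host:port` form, so check its documentation.

```python
import urllib.request
from urllib.parse import quote


def build_proxy_url(host: str, port: int, username: str, password: str) -> str:
    """Return an http:// proxy URL with percent-encoded credentials."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"http://{user}:{pwd}@{host}:{port}"


def make_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Create an opener that sends both HTTP and HTTPS requests via the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


if __name__ == "__main__":
    # Placeholder values; substitute the details from your provider.
    proxy_url = build_proxy_url("proxy.example.com", 8080, "user", "p@ss:word")
    opener = make_opener(proxy_url)
    # opener.open("https://example.com", timeout=10) would go through the proxy.
    print(proxy_url)
```

Percent-encoding the credentials matters when a password contains characters such as `@` or `:`, which would otherwise break the URL.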

Step 2: Rotation Logic

Do not rely on one proxy for the entire job. Rotate through your list and switch IPs every 1-5 queries. If a request fails or returns a CAPTCHA, immediately switch to the next proxy to keep your scraper running smoothly.
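The rotation rule above could be sketched as follows. `ProxyRotator` is a hypothetical helper, and the default of 3 queries per proxy is simply one value inside the 1-5 range mentioned above.

```python
import itertools


class ProxyRotator:
    """Cycle through proxies, switching after a fixed number of queries."""

    def __init__(self, proxies, queries_per_proxy=3):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)
        self._limit = queries_per_proxy
        self._used = 0
        self._current = next(self._cycle)

    def get(self):
        """Return the proxy for the next query, rotating once the limit is reached."""
        if self._used >= self._limit:
            self.rotate()
        self._used += 1
        return self._current

    def rotate(self):
        """Switch to the next proxy immediately (call on a failure or CAPTCHA)."""
        self._current = next(self._cycle)
        self._used = 0
        return self._current
```

Call `get()` before each query and `rotate()` whenever a request fails or returns a CAPTCHA. This version rotates in list order for clarity; a randomised pick can be substituted if ordered rotation proves too predictable.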

Step 3: Mimic Human Behaviour

Search engines look for bot-like patterns, so add random delays, rotate User-Agent strings, and manage cookies to make your behaviour look more natural and consistent with a real browser session. If you are building scripts with Python web scraping tools, these techniques become even more important to avoid detection and improve success rates.
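A rough sketch of these three techniques with the standard library is shown below. The User-Agent strings are examples that will age and should be refreshed, the 3 to 7 second range is only a starting point, and all helper names are illustrative.

```python
import http.cookiejar
import random
import urllib.request

# Example User-Agent strings; keep this list current in real use.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.4 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0",
]


def random_delay(min_s=3.0, max_s=7.0, rng=random):
    """Pick a wait time in seconds from a range instead of a fixed interval."""
    return rng.uniform(min_s, max_s)


def build_headers(rng=random):
    """Return request headers with a randomly chosen User-Agent."""
    return {
        "User-Agent": rng.choice(USER_AGENTS),
        "Accept-Language": "en-US,en;q=0.9",
    }


def make_session_opener():
    """Return (opener, jar); the opener stores and resends cookies via the jar."""
    jar = http.cookiejar.CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))
    return opener, jar
```

Between queries, the scraper would call `time.sleep(random_delay())` and pass `build_headers()` to each request. Keeping one User-Agent per cookie jar, rather than changing it mid-session, is likely to look more consistent.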

Step 4: CAPTCHA and Block Handling

Build error handling into your scraper. If you hit a CAPTCHA or block page, mark that proxy as temporarily inactive and move on to the next one. If CAPTCHAs appear too often, consider adding a third-party solver.
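One way to sketch this step is a block detector plus a cooldown list. The marker strings and status codes below are heuristics and assumptions, not a definitive list of what any search engine returns, and the 300-second cooldown is an arbitrary example value.

```python
import time

# Heuristic markers; the exact text of a block page is an assumption.
BLOCK_MARKERS = ("captcha", "unusual traffic")


def looks_blocked(status_code, body):
    """Guess whether a response is a block or CAPTCHA page."""
    if status_code in (403, 429):
        return True
    lowered = body.lower()
    return any(marker in lowered for marker in BLOCK_MARKERS)


class ProxyCooldown:
    """Track which proxies are temporarily inactive after being blocked."""

    def __init__(self, proxies, cooldown_s=300.0, clock=time.monotonic):
        self._proxies = list(proxies)
        self._cooldown_s = cooldown_s
        self._clock = clock
        self._blocked_until = {}

    def mark_blocked(self, proxy):
        """Take a proxy out of service for the cooldown period."""
        self._blocked_until[proxy] = self._clock() + self._cooldown_s

    def available(self):
        """Return proxies that were never blocked or whose cooldown has expired."""
        now = self._clock()
        return [
            p for p in self._proxies
            if p not in self._blocked_until or self._blocked_until[p] <= now
        ]
```

After each response, call `looks_blocked(...)`; when it returns `True`, call `mark_blocked(proxy)` and retry the query with a proxy from `available()`.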

Step 5: Parsing and Storage

After you fetch the SERP HTML, use tools like BeautifulSoup, Scrapy, or similar libraries to extract titles, links, snippets, ads, and any other data you need, then store the results in a structured format such as CSV, JSON, or a database. A CORS proxy is only relevant if you scrape from client-side browser code, where cross-origin restrictions apply; server-side scrapers are not affected by them.
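To illustrate the parsing step without extra dependencies, the sketch below uses Python's built-in `html.parser` in place of BeautifulSoup or Scrapy. The markup it expects (`div.result` containing an `a`, an `h3`, and a `span.snippet`) is hypothetical. Real SERP HTML differs by search engine and changes often, so the selectors would need adapting.

```python
from html.parser import HTMLParser


class ResultParser(HTMLParser):
    """Collect title/link/snippet dicts from the hypothetical markup."""

    def __init__(self):
        super().__init__()
        self.results = []
        self._current = None  # result dict currently being filled
        self._field = None    # "title" or "snippet" while inside that element

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "div" and "result" in classes:
            self._current = {"title": "", "link": "", "snippet": ""}
            self.results.append(self._current)
        elif self._current is not None:
            if tag == "a" and not self._current["link"]:
                self._current["link"] = attrs.get("href") or ""
            elif tag == "h3":
                self._field = "title"
            elif tag == "span" and "snippet" in classes:
                self._field = "snippet"

    def handle_endtag(self, tag):
        if tag in ("h3", "span"):
            self._field = None
        elif tag == "div":
            # Assumes result blocks contain no nested <div> elements.
            self._current = None

    def handle_data(self, data):
        if self._current is not None and self._field:
            self._current[self._field] += data


def parse_results(html):
    """Parse an HTML string and return a list of result dicts."""
    parser = ResultParser()
    parser.feed(html)
    parser.close()
    return parser.results
```

The returned list of dicts can be written out directly with `json.dump` or `csv.DictWriter` for storage.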

Step 6: Monitoring and Health Check

Regularly track success rates and the health of each proxy. If more than 5% of your requests get blocked, lower your request rate or upgrade to a better SERP scraping proxy pool to maintain stable performance.
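The 5% rule can be tracked with a small rolling-window counter, sketched below. `BlockRateMonitor` and its window size of 200 requests are illustrative choices, not a recommended standard.

```python
from collections import deque


class BlockRateMonitor:
    """Track the share of blocked requests over the most recent N requests."""

    def __init__(self, window=200, threshold=0.05):
        self._outcomes = deque(maxlen=window)  # True means the request was blocked
        self._threshold = threshold

    def record(self, blocked):
        """Record one request outcome."""
        self._outcomes.append(bool(blocked))

    def block_rate(self):
        """Return the blocked fraction in the current window (0.0 if empty)."""
        if not self._outcomes:
            return 0.0
        return sum(self._outcomes) / len(self._outcomes)

    def should_slow_down(self):
        """True when the block rate exceeds the threshold."""
        return self.block_rate() > self._threshold
```

A rolling window reacts to recent conditions, so a burst of blocks triggers a slowdown even if the lifetime average still looks healthy.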

Common Problems & Troubleshooting Guide

Even the best SERP scraping proxies can face issues. Understanding how to handle these problems quickly is important for keeping your data pipeline running smoothly.

IP Blocks and Bans: This is the most common issue. When an IP starts receiving CAPTCHAs or incorrect results, it has usually been flagged.

Fix: Rotate IPs more frequently, use a stronger proxy pool such as residential IPs, and add random human-like delays between requests.

Quality of Proxies: Low-quality or free proxies often cause slow speeds, high latency, and frequent disconnections. This reduces data accuracy.

Fix: Choose reliable, paid proxy providers like the ones mentioned above. Test your proxy list for speed and uptime before running any large job.
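A pre-flight check along these lines could be sketched as follows. The `probe` callable is an assumption: it stands for whatever function makes one request through a given proxy and raises `OSError` on failure (as `urllib` does for connection errors and timeouts). The 2-second latency limit is an arbitrary example.

```python
import time


def check_proxy(proxy, probe, max_latency_s=2.0, clock=time.perf_counter):
    """Return (ok, latency_s) for one probe request through the proxy."""
    start = clock()
    try:
        probe(proxy)
    except OSError:
        return False, clock() - start
    latency = clock() - start
    return latency <= max_latency_s, latency


def filter_healthy(proxies, probe, max_latency_s=2.0):
    """Keep only proxies that respond successfully within the latency limit."""
    return [p for p in proxies if check_proxy(p, probe, max_latency_s)[0]]
```

Running `filter_healthy` over the full list before a large job removes dead or slow proxies up front instead of discovering them mid-run.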

Handling Large-Scale Scraping: Working with millions of data points requires a strong infrastructure. Proxies alone are not enough to prevent system overload.

Fix: Separate scraping, parsing, and storage into different processes. Use distributed systems, queues, and asynchronous programming to manage tasks efficiently.
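The separation described above can be sketched with `asyncio` queues, where fetching and parsing run as independent workers. `fetch` and `parse` are caller-supplied placeholders, and the worker count and queue size are illustrative. A production pipeline would add a storage stage, retries, and error handling inside `fetch`.

```python
import asyncio


async def fetch_worker(keywords, pages, fetch):
    """Take keywords from one queue, fetch them, and pass the HTML on."""
    while True:
        kw = await keywords.get()
        try:
            html = await fetch(kw)
            await pages.put((kw, html))
        finally:
            keywords.task_done()


async def parse_worker(pages, results, parse):
    """Take fetched pages from the queue and parse them."""
    while True:
        kw, html = await pages.get()
        try:
            results.append((kw, parse(html)))
        finally:
            pages.task_done()


async def run_pipeline(keyword_list, fetch, parse, n_fetchers=3):
    """Run fetchers and a parser concurrently until every keyword is processed."""
    keywords = asyncio.Queue()
    pages = asyncio.Queue(maxsize=100)  # bounded so fetchers cannot outrun parsing
    results = []
    for kw in keyword_list:
        keywords.put_nowait(kw)
    workers = [
        asyncio.create_task(fetch_worker(keywords, pages, fetch))
        for _ in range(n_fetchers)
    ]
    workers.append(asyncio.create_task(parse_worker(pages, results, parse)))
    await keywords.join()  # every keyword fetched and queued for parsing
    await pages.join()     # every page parsed
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

This sketch assumes `fetch` handles its own errors; an exception that escapes it stops that worker. The bounded `pages` queue provides backpressure, so a slow parser makes fetchers wait rather than letting memory grow.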

Best Practices for SERP Scraping

To keep SERP scraping stable and long-lasting, your scraper needs to operate carefully and behave in a natural way.

- Implement Smart Rotation: Do not rotate IPs in a fixed order. Pick IPs at random from your SERP scraping proxy pool so that search engines find it harder to spot patterns.

- Vary Request Timing: Avoid fixed delays. Instead of always waiting 5 seconds, use random delays such as 3 to 7 seconds to imitate natural behaviour.

- Use Realistic User Agents: Use updated and common User-Agent strings that match real browsers and operating systems. Do not use default or easily identifiable scraper User-Agents.

- Respect robots.txt: Scrapers are automated clients, so it is good practice to follow the rules in robots.txt whenever possible.

- Cache Results: Cache SERP results for frequent keywords to avoid sending unnecessary requests. This saves proxy credits, speeds up your workflow, and reduces your load on search engine servers.
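As one illustration of the caching practice, the sketch below keeps results in memory with a time-to-live. `SerpCache`, the one-hour TTL, and the `fetch(keyword, location)` signature are all assumptions. The location is part of the cache key because results differ by region.

```python
import time


class SerpCache:
    """In-memory cache of SERP results keyed by (keyword, location)."""

    def __init__(self, ttl_s=3600.0, clock=time.monotonic):
        self._ttl_s = ttl_s
        self._clock = clock
        self._entries = {}  # key -> (stored_at, value)

    def get_or_fetch(self, keyword, location, fetch):
        """Return a fresh cached result, or call fetch() and store its result."""
        key = (keyword.strip().lower(), location)
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl_s:
            return entry[1]
        value = fetch(keyword, location)
        self._entries[key] = (now, value)
        return value
```

For rank tracking, the TTL should be shorter than the reporting interval so that each report reflects a fresh fetch.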

Real-World Applications of SERP Scraping

The data collected through a reliable SERP scraping proxy plays an important role in many successful digital strategies across different industries.

SEO Professionals and Ranking Monitoring

With accurate SERP data, you can track thousands of keyword rankings across multiple countries or cities at the same time. You can study how competitors write their title tags, descriptions, and featured snippets to understand why they rank well. You can also follow search trends and watch for new SERP features such as AI Overviews or Knowledge Panels.

E-commerce and Market Research

A strong SERP scraping proxy setup allows you to track competitor pricing by scraping Google Shopping results and other product listings. You can gather product reviews and ratings shown in SERPs to learn about customer sentiment. You can also spot new opportunities by analysing related searches and the “People Also Ask” sections.

Conclusion

The need for accurate, real-time data makes the SERP scraping proxy a vital tool for SEO and competitive intelligence. Reliable scraping depends on using high-quality, residential-grade proxies together with smart rotation and strong anti-detection methods.

By following the setup steps and best practices outlined above, you can build a stable and scalable data pipeline while reducing the chance of blocks. For the best results, we recommend choosing a trusted premium provider like 9Proxy to ensure the anonymity and speed your projects require. Let’s start your data revolution today.